AI tools for beam eye tracker cracked

Related Tools:

Beam AI

Beam AI is a platform for Agentic Automation and AI Agents that automates manual workflows with AI agents to boost productivity. The platform is used by Fortune 500 companies and scale-ups. With features like pre-trained agents, integrations, and an easy-to-navigate UI, Beam AI lets users create and deploy AI tools tailored to their specific needs. The platform is designed to scale with a business, offering industry-specific solutions and support for building AI-native organizations.

Beam AI

Beam AI bills itself as the #1 end-to-end automated takeoff software for General Contractors, Subcontractors, and Suppliers in the construction industry. It uses AI to provide accurate and fast quantity takeoffs for various trades, saving up to 90% of the time typically spent on manual takeoffs. With Beam AI, users can streamline their bidding process, send out more estimates, and focus on value engineering to build competitive estimates. The software offers cloud-based collaboration, fully done-for-you quantity takeoffs, auto-detection of spec details, and the ability to process multiple takeoffs in parallel.

Qlerify

Qlerify is an AI-powered software modeling tool that helps digital transformation teams accelerate the digitalization of enterprise business processes. It allows users to quickly create workflows with AI, generate source code in minutes, and reuse actionable models in various formats. Qlerify supports frameworks such as Event Storming, Domain-Driven Design, and Business Process Modeling, and provides a user-friendly interface for collaborative modeling.

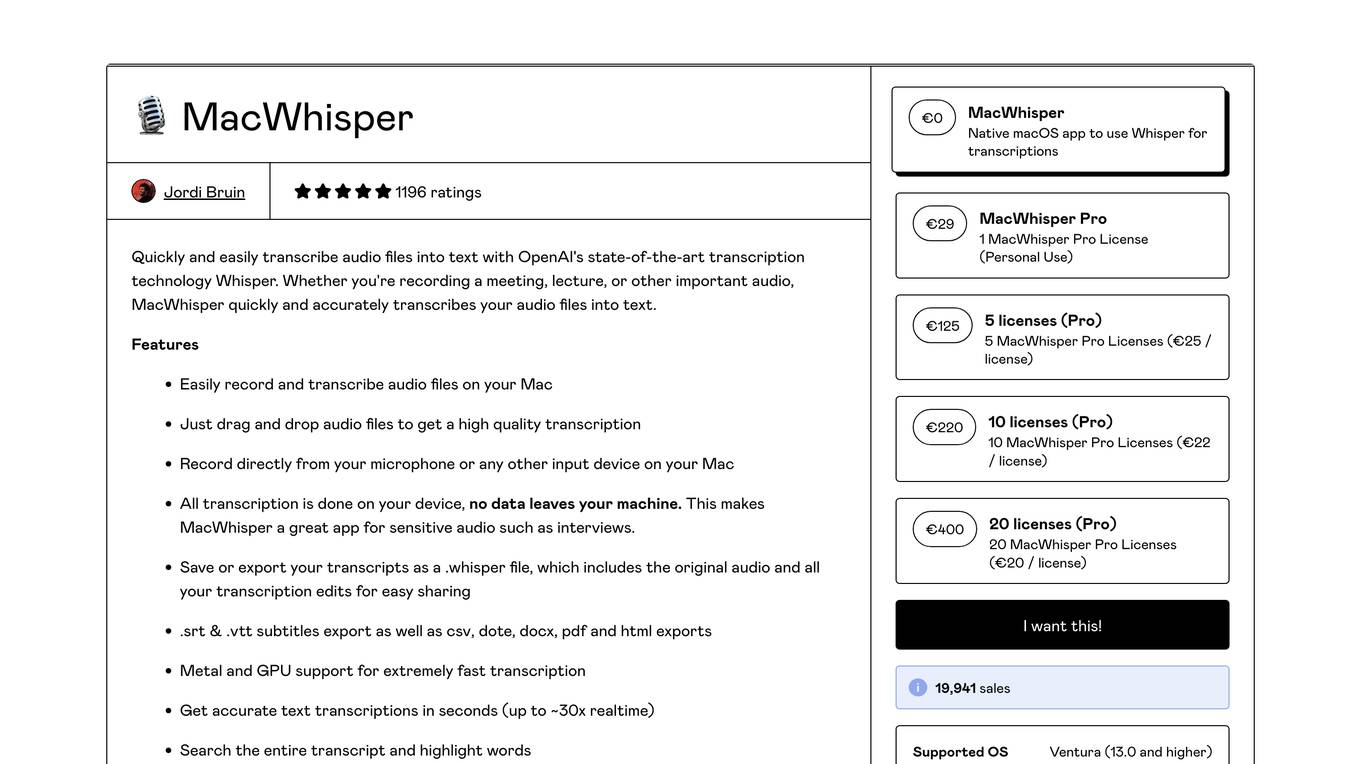

MacWhisper

MacWhisper is a native macOS application that utilizes OpenAI's Whisper technology for transcribing audio files into text. It offers a user-friendly interface for recording, transcribing, and editing audio, making it suitable for various use cases such as transcribing meetings, lectures, interviews, and podcasts. The application is designed to protect user privacy by performing all transcriptions locally on the device, ensuring that no data leaves the user's machine.

Beam Eye Tracker Extension Copilot

Build extensions using the Eyeware Beam eye-tracking SDK.

Beam-Dyer Operator Assistant

Hello, I'm the Beam-Dyer Operator Assistant! What would you like help with today?